DataOps 3.0 or how to build end-to-end data workflows in 2020

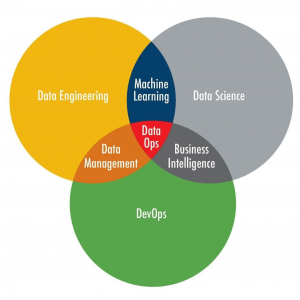

DevOps improves the quality, velocity, and scalability of software development. DataOps borrows methods from DevOps to bring the same improvements to production data science. Workflow management is a key element of the DataOps concept, and serverless computing is a key feature of DataOps 3.0.

This session covers both the theory and the practice of DataOps. We will show you how to bring best practices such as CI/CD, quality validation, and reproducibility into your data science life cycle. So many tools, libraries, and frameworks are available for DataOps that it is difficult to select the most suitable one. We will compare popular workflow managers such as Airflow, Luigi, Azkaban, and Pinball, as well as cloud-native solutions like Step Functions and Simple Workflow Service (SWF). Finally, this session teaches you how to build workflows in Airflow, Luigi, and Amazon Web Services (AWS).

Speaker: Ruslan Korniichuk

Ukrainian Software and Artificial Intelligence Engineer living in Poland. Information Technology Architect at Capgemini. Former Python Developer and Data Engineer at Fortune 500 companies. Core areas are Software Development and Cloud Computing. Especially interested in Artificial Intelligence.

A history of success in diverse industry sectors including Manufacturing, FMCG, Healthcare, Banking, Education, and Startups.

PhD student. Taught information system design and server-side technologies at the University of Silesia. Public speaker at PyCon PL, AWS User Group, CodeteCON, Silesian AI, and Data Science Silesia.